Mapping Texts: Our Idea

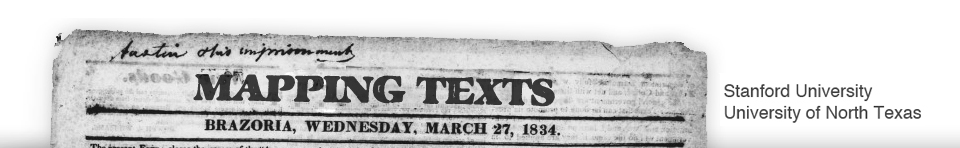

Mapping Texts is a collaboration between the University of North Texas and Stanford University with a pretty simple mission: to experiment with new methods for finding and analyzing meaningful patterns embedded within massive collections of historical newspapers.

IDEA BEHIND THE PROJECT

Why do we think this is important? Because, quite simply, historical newspapers are currently being digitized at a scale that is rapidly overwhelming our traditional methods of research. The Chronicling America project (a joint endeavor of the National Endowment for the Humanities and the Library of Congress), for example, recently digitized its one millionth historical newspaper page, and it will soon make millions more freely available online.

What can scholars do with such an immense wealth of information? Currently, they cannot do much. Without tools and methods capable of handling such large datasets, and thus of sifting out the meaningful patterns embedded within them, scholars typically find themselves confined to performing only basic word searches across enormous collections. While such basic searches can, indeed, find stray information scattered in unlikely places, they become increasingly less useful as datasets continue to grow in size. If a search for a particular term yields 4,000,000 results, even those search results produce a dataset far too large for any single scholar to analyze in a meaningful way using traditional methods.

Our goal, then, is to help solve this problem by combining the two most promising methods for finding meaning in such massive collections of historical newspapers: text-mining and visualization.

THE NEWSPAPERS

For this project, we are experimenting on a collection of about 232,500 pages of historical newspapers digitized by the Texas Digital Newspaper Program at the University of North Texas Library. These newspapers were digitized in conjunction with the Chronicling America project, as well as under UNT’s own digital newspaper program, and were selected because:

- With nearly a quarter million pages, we could experiment with scale.

- The newspapers were all digitized according to the standards set by the national Chronicling America project, providing a uniform sample.

- The Texas orientation of all the newspapers gave us a consistent geography for our visualization experiments.

BUILDING PROTOTYPES

And so we have been experimenting with mining language patterns and mapping the results. We are currently working on a series of prototypes of what this might look like, which we will be releasing on this site as we develop them. These prototypes consist of visualizations that build on top of text- and data-mining that we are doing with the newspaper collection.

Our first prototype, which is nearly ready for initial release, examines the quantity and quality of information available in our newspaper collection as it is spread out across both time and space. Future prototypes will attempt to answer specific research questions using the collection.
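To give a rough sense of what measuring the quantity of information across time and space could involve, here is a minimal sketch in Python. It is not the project's actual code: the record layout (city, year, word count), the function name `words_by_place_and_year`, and the sample values are all assumptions invented for illustration.

```python
from collections import defaultdict

# Hypothetical page records: (city, year, word_count).
# These values are invented for illustration, not drawn from the collection.
pages = [
    ("Houston", 1890, 1200),
    ("Houston", 1890, 800),
    ("Dallas", 1890, 1500),
    ("Dallas", 1905, 900),
]


def words_by_place_and_year(records):
    """Sum the word counts of all pages sharing a (city, year) pair."""
    totals = defaultdict(int)
    for city, year, word_count in records:
        totals[(city, year)] += word_count
    return dict(totals)


totals = words_by_place_and_year(pages)
```

A table like `totals` is the kind of summary a map or timeline could then be drawn from, with one value per place and period.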

THE PARTNERSHIP

The project relies on two teams, one at the University of North Texas and one at Stanford’s Bill Lane Center for the American West, that each bring unique skills to the project. At UNT, we have expertise in the historical content and a particularly talented team of computer scientists specializing in natural language processing for the text-mining side of the project. At Stanford’s Lane Center, we have a team deeply skilled in both complex historical visualizations and spatial mapping. (For more detail on the folks behind the project, see the People section.)

Between the two teams, it seems to us, we have a unique opportunity to conduct experiments in what might be possible through text-mining and visualizing a large collection of historical newspapers.

- Mapping Texts is a collaboration between scholars, staff and students at Stanford University and the University of North Texas. It is supported by the National Endowment for the Humanities.
